The Third Wave of CI/CD

For 25 years, CI/CD has been deterministic. Push code, run tests, pass or fail. No judgment calls. No creativity. No ambiguity.

That changed on February 5, 2026.

GitHub's R&D lab, GitHub Next, announced Continuous AI: a framework for running AI agents as background participants in your repository. Not AI that reviews your code in a PR comment. AI that writes code, opens PRs, responds to issues, and makes judgment calls, all triggered by the same events that trigger your CI pipeline today.

The concept: if CI/CD automates the things computers are good at (building, testing, deploying), Continuous AI automates the things that previously required a human sitting at a keyboard. Triage an issue. Draft a fix. Update documentation after an API change. Respond to a security advisory.

This is our take on it. We build custom software with these tools. We use Claude Code, GitHub Actions, and agentic workflows in client projects. This is not coverage from a news desk.

Continuous AI, Explained

Here is the distinction that matters:

- Traditional CI/CD: An event triggers a deterministic action. Push triggers build. Build triggers test. Test triggers deploy. Every step follows rules with no variation.

- Continuous AI: An event triggers an AI agent with natural language instructions. The agent has judgment. It can decide what to do, not just follow rules.

The mechanism is straightforward. A developer writes a .md workflow file with YAML frontmatter. The frontmatter defines triggers, permissions, safe-output paths, and which coding agent to use. The body contains natural language instructions. Then gh aw compile transforms that markdown into a standard GitHub Actions YAML workflow.

When the workflow triggers, a coding agent (Claude Code, OpenAI Codex, or GitHub Copilot) executes the instructions within the boundaries set by the safe-outputs security model.
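Here is a minimal sketch of the two-part file format in code. It separates the YAML frontmatter from the natural language body, assuming the frontmatter is fenced by lines that contain only ---, as in the example later in this post. This is not gh aw's implementation (the CLI is written in Go), and split_workflow is a hypothetical name. Actually parsing the YAML would need a library such as PyYAML, so the sketch stops at the split.

```python
def split_workflow(text: str) -> tuple[str, str]:
    """Split a markdown workflow file into (frontmatter, body).

    Hypothetical helper: assumes the file opens with a line that is exactly
    '---' and that the frontmatter ends at the next such line.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("workflow file must start with '---'")
    for i in range(1, len(lines)):
        if lines[i].strip() == "---":
            frontmatter = "\n".join(lines[1:i])      # raw YAML, still unparsed
            body = "\n".join(lines[i + 1:]).strip()  # natural language instructions
            return frontmatter, body
    raise ValueError("frontmatter is never closed with '---'")


example = """---
tools:
  - claude-code
---

# Bug Fix Agent

When a new bug report is filed, draft a fix.
"""

frontmatter, body = split_workflow(example)
print(frontmatter)
print(body.splitlines()[0])
```

The configuration half goes to a YAML parser and the instruction half goes to the coding agent. That split is the whole reason the format stays readable in a PR diff.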

The gh aw CLI

- MIT licensed, open source

- Written in Go, 380+ stars on GitHub

- Commands: gh aw compile, gh aw run, gh aw list

- Install: gh extension install github/gh-aw

What a Workflow Looks Like

Here is the natural language workflow definition, followed by what it compiles to:

```markdown
---
triggers:
  - issues: [opened, labeled:bug]
permissions:
  contents: write
  pull-requests: write
safe-outputs:
  - "src/**/*.ts"
  - "src/**/*.test.ts"
tools:
  - claude-code
---

# Bug Fix Agent

When a new bug report is filed:

1. Read the issue description and any attached stack traces
2. Search the codebase for the relevant files and functions
3. Write a fix and add a test that reproduces the bug
4. Open a pull request with a clear description of the change
```

```yaml
name: bug-fix-agent
on:
  issues:
    types: [opened, labeled]

permissions:
  contents: write
  pull-requests: write

jobs:
  fix-bug:
    if: contains(github.event.label.name, 'bug')
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: github/agentic-run@v1
        with:
          agent: claude-code
          safe-outputs: |
            src/**/*.ts
            src/**/*.test.ts
          instructions: |
            Read the issue, find the relevant code, write a fix with tests, open a PR.
```

The first block is what you write. The second is what runs. The markdown file is human-readable and reviewable. The compiled YAML plugs directly into GitHub Actions.
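The compiled output shows one shorthand trigger expanding into two pieces of YAML. The entry labeled:bug becomes the labeled event type plus an if: condition on the label name. The sketch below reproduces only that mapping, inferred from this single example. compile_issue_triggers is a hypothetical name, and gh aw's real compiler is not reproduced here.

```python
def compile_issue_triggers(entries: list[str]) -> tuple[list[str], list[str]]:
    """Split shorthand such as ['opened', 'labeled:bug'] into
    (event types, if-conditions), mirroring the compiled example above.

    Hypothetical: inferred from one example, not gh aw's real compiler.
    """
    event_types: list[str] = []
    conditions: list[str] = []
    for entry in entries:
        event, _, label = entry.partition(":")  # 'labeled:bug' -> ('labeled', ':', 'bug')
        if event not in event_types:
            event_types.append(event)
        if label:
            conditions.append(f"contains(github.event.label.name, '{label}')")
    return event_types, conditions


event_types, conditions = compile_issue_triggers(["opened", "labeled:bug"])
print(event_types)  # ['opened', 'labeled']
print(conditions)   # ["contains(github.event.label.name, 'bug')"]
```

In the compiled example, the if: condition gates the entire job. An issue event without a bug label, such as a plain opened event, would therefore be skipped. A real compiler has to decide how conditions combine across event types.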

25 Years of CI/CD, and Why This Is Different

To understand why Continuous AI matters, look at what came before it. Every previous evolution was about automating deterministic tasks faster. Continuous AI is the first to automate tasks that require judgment.

- Extreme Programming: Kent Beck introduces CI as a development practice

- CruiseControl: one of the first open-source CI servers, automating builds

- Hudson / Jenkins: CI becomes mainstream with plugin ecosystems

- Travis CI: CI moves to the cloud, config-as-code (.yml)

- GitHub Actions: CI/CD integrated directly into source control

- GitOps: infrastructure as code, policy as code

- AI-Assisted CI: Copilot suggestions in PRs, AI code review

- Continuous AI: AI agents as first-class CI participants


When Kent Beck and Ron Jeffries introduced continuous integration as an Extreme Programming practice in 1999, the idea was radical: merge code frequently instead of waiting for a "big bang" integration phase. CruiseControl (2001) automated the idea. Jenkins made it mainstream. Travis CI moved it to the cloud. GitHub Actions embedded it into source control.

Each step automated a mechanical task. Build faster. Test faster. Deploy faster. But the tasks themselves stayed deterministic. A build either passes or fails. A test either succeeds or errors. There is no gray area.

Continuous AI introduces gray area on purpose. "Analyze this bug report" is not a pass/fail operation. "Update the docs to reflect this API change" requires reading comprehension. "Draft a fix for this stack trace" requires judgment about which approach is best.

"Traditional CI automates what computers are good at. Continuous AI automates what used to require a human at a keyboard."

Writing Your First Agentic Workflow

The workflow file has two parts: YAML frontmatter for configuration, and a markdown body for natural language instructions.

Frontmatter Options

| Field | Purpose | Example |
|---|---|---|
| triggers | Repository events that start the agent | `issues: [opened, labeled:bug]` |
| permissions | GitHub API permissions the agent receives | `contents: write`, `pull-requests: write` |
| safe-outputs | File paths the agent is allowed to modify | `src/**/*.ts`, `docs/**/*.md` |
| tools | Which coding agent to use | `claude-code`, `codex`, `copilot` |
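Because the frontmatter is plain configuration, mistakes in it can be caught before anything runs. Here is a minimal sketch of checks derived from the table above. It assumes the YAML has already been parsed into a dict. validate_frontmatter is hypothetical, and treating all four fields as required is this sketch's assumption, not a documented gh aw rule.

```python
REQUIRED_FIELDS = {"triggers", "permissions", "safe-outputs", "tools"}
KNOWN_AGENTS = {"claude-code", "codex", "copilot"}
PERMISSION_LEVELS = {"read", "write", "none"}  # per-scope levels in GitHub Actions


def validate_frontmatter(config: dict) -> list[str]:
    """Return a list of problems; an empty list means the config passed.

    Hypothetical checks derived from the table above, not gh aw's own rules.
    `config` is frontmatter that has already been parsed into a dict.
    """
    missing = sorted(REQUIRED_FIELDS - set(config))
    problems = [f"missing field: {name}" for name in missing]
    for agent in config.get("tools", []):
        if agent not in KNOWN_AGENTS:
            problems.append(f"unknown agent: {agent}")
    for scope, level in config.get("permissions", {}).items():
        if level not in PERMISSION_LEVELS:
            problems.append(f"invalid permission level for {scope}: {level}")
    return problems


good = {
    "triggers": [{"issues": ["opened", "labeled:bug"]}],
    "permissions": {"contents": "write", "pull-requests": "write"},
    "safe-outputs": ["src/**/*.ts", "src/**/*.test.ts"],
    "tools": ["claude-code"],
}
bad = {"tools": ["gpt-agent"], "permissions": {"contents": "admin"}}
print(validate_frontmatter(good))  # []
print(validate_frontmatter(bad))
```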

Common Use Cases

These are the patterns we see as most immediately useful:

- Bug triage agent: Analyze new issues, read the stack trace, label by severity, assign to the right team. No human sorting through a backlog every morning.

- Documentation updater: When API routes change, the agent reads the diff and updates the corresponding docs. Documentation stays current without anyone remembering to do it.

- Dependency updater: When a security advisory drops, the agent drafts a PR to bump the affected package. Security patches stop waiting in a queue.

- Code reviewer: Not just linting. Contextual review that understands business logic and flags concerns a linter would miss.

The Security Model

This is the part that determines whether Continuous AI is practical or just a demo. The safe-outputs field restricts which files and directories the agent can modify. An agent with safe-outputs: ["docs/**/*.md"] can only write to markdown files in the docs directory. It cannot touch source code, configuration files, or CI pipelines.
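The article describes safe-outputs as path patterns. Assuming ordinary glob semantics (** spans directories, * stays inside one path segment), a path allowlist check might look like the sketch below. This is not how gh aw actually enforces safe-outputs, and glob_to_regex and is_allowed are hypothetical names. The sketch also normalizes paths so that something like docs/../src/app.md cannot escape the allowed directory.

```python
import posixpath
import re


def glob_to_regex(pattern: str) -> re.Pattern:
    """Translate a glob such as 'src/**/*.ts' into a compiled regex.

    Assumed semantics: '**/' matches zero or more directories, '*' matches
    within a single path segment, '?' matches one non-'/' character.
    """
    out, i = [], 0
    while i < len(pattern):
        if pattern.startswith("**/", i):
            out.append("(?:.*/)?")
            i += 3
        elif pattern.startswith("**", i):
            out.append(".*")
            i += 2
        elif pattern[i] == "*":
            out.append("[^/]*")
            i += 1
        elif pattern[i] == "?":
            out.append("[^/]")
            i += 1
        else:
            out.append(re.escape(pattern[i]))
            i += 1
    return re.compile("".join(out) + r"\Z")


def is_allowed(path: str, safe_outputs: list[str]) -> bool:
    """Return True if the agent may write `path` under the given patterns."""
    normalized = posixpath.normpath(path)
    # Reject absolute paths and anything that escapes the repository root.
    if normalized.startswith("/") or normalized == ".." or normalized.startswith("../"):
        return False
    return any(glob_to_regex(p).match(normalized) for p in safe_outputs)


docs_only = ["docs/**/*.md"]
print(is_allowed("docs/guides/setup.md", docs_only))  # True
print(is_allowed("src/app.ts", docs_only))            # False
print(is_allowed("docs/../src/app.md", docs_only))    # False
```

Normalizing before matching matters. A regex applied to the raw string would accept docs/../src/app.md, because the ** segment happily matches the .. component.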

Every agent output is submitted as a pull request. Agents cannot merge their own PRs. The permissions model mirrors GitHub Actions permissions, so the same security review process you use for Actions applies to agentic workflows.
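The rule that agents cannot merge their own PRs can be expressed as a small check. This is a hypothetical sketch: PullRequest, can_merge, and the known set of human logins are illustrative assumptions. On GitHub you would normally get this behavior from branch protection rules that require approving reviews, not from custom code.

```python
from dataclasses import dataclass, field


@dataclass
class PullRequest:
    author: str
    approvals: set[str] = field(default_factory=set)  # logins that approved


def can_merge(pr: PullRequest, merger: str, humans: set[str]) -> bool:
    """Hypothetical merge gate for agent-authored pull requests."""
    # Agents never merge, so in particular they cannot merge their own PRs.
    if merger not in humans:
        return False
    # A PR opened by an agent also needs at least one human approval.
    if pr.author not in humans:
        return any(reviewer in humans for reviewer in pr.approvals)
    return True


humans = {"alice", "bob"}
pr = PullRequest(author="bug-fix-agent")
print(can_merge(pr, "bug-fix-agent", humans))  # False: agent merging its own PR
print(can_merge(pr, "alice", humans))          # False: no human approval yet
pr.approvals.add("bob")
print(can_merge(pr, "alice", humans))          # True
```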

The Paradox: AI Makes Developers Feel Faster While Making Them Slower

Before we talk about what Continuous AI changes, we need to talk about what current AI tools have not fixed.

The Developer AI Paradox

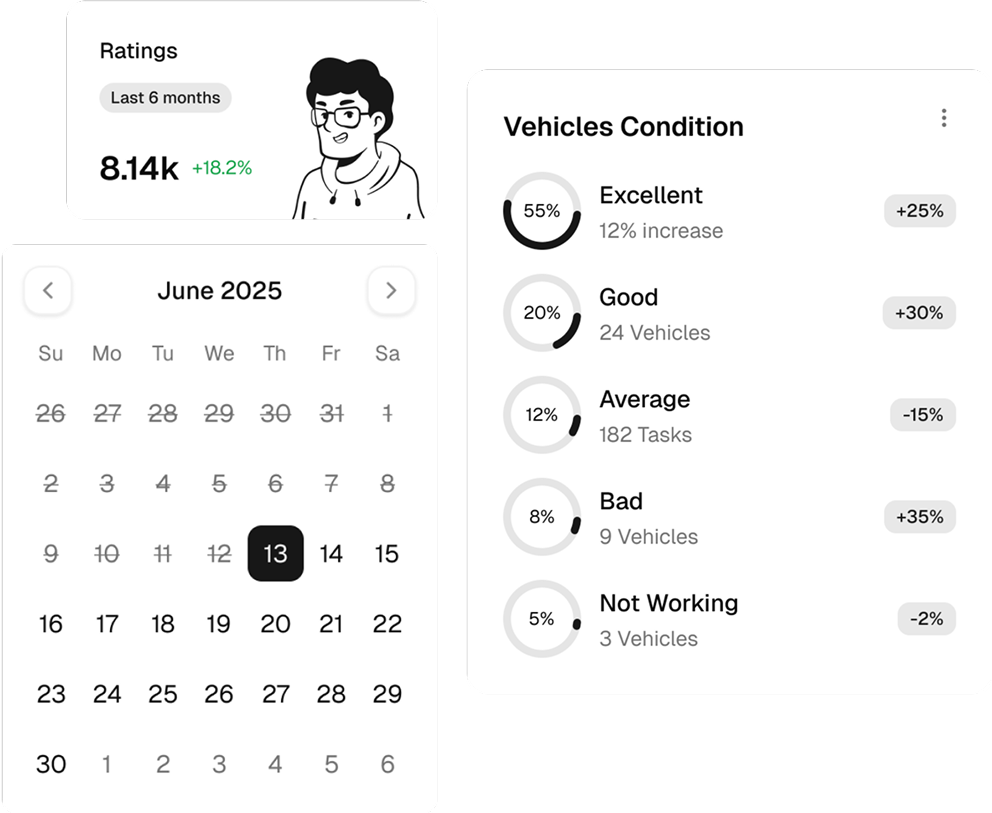

- 84% of developers use AI tools

- 41% of code is AI-generated

- 46% distrust AI outputs

- Perceived speed: 20% faster (self-reported)

- Measured speed: 19% slower (controlled study)

Sources: index.dev Developer Nation Survey 2026, METR controlled productivity study. 66% of developers report encountering "almost right" AI solutions requiring manual correction.

The data is uncomfortable. 84% of developers use AI tools, and 41% of code is now AI-generated. But a controlled study from METR found that developers using AI assistants were actually 19% slower on tasks, despite reporting they felt 20% faster.

The likely explanation is not complicated. Current AI coding tools are synchronous: you prompt, you wait, you review the output, you decide what to keep. That review loop interrupts your flow. When the output is "almost right" (which 66% of developers say happens regularly), fixing it often takes longer than writing it from scratch.
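To make the METR gap concrete, here is the arithmetic for a hypothetical 60-minute task. It assumes both figures are changes in task completion time: the 20% is time developers believed they saved, and the 19% is extra time actually measured. The 60-minute baseline is made up for illustration.

```python
def perception_gap(baseline_minutes: float, perceived_speedup: float,
                   measured_slowdown: float) -> tuple[float, float]:
    """Return (felt, actual) task time in minutes for one task."""
    felt = baseline_minutes * (1 - perceived_speedup)    # time developers believed it took
    actual = baseline_minutes * (1 + measured_slowdown)  # time it actually took
    return felt, actual


felt, actual = perception_gap(60, 0.20, 0.19)
print(f"felt like {felt:.1f} min, took {actual:.1f} min")
# felt like 48.0 min, took 71.4 min
```

Under those assumptions, the task took about 23 minutes longer than it felt like it did. That is nearly 50% more time than the developer perceived.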

Why Continuous AI Sidesteps the Paradox

Continuous AI is fundamentally different because it is asynchronous. The AI works in the background, triggered by repository events. You do not prompt it. You do not wait for it. You review its output the same way you review any PR, a workflow where developers already have discipline and muscle memory.

Instead of context-switching to interact with an AI assistant, the AI operates independently and presents finished work for review. In terms of the SPACE framework for developer productivity, co-developed by Microsoft Research, this should improve Efficiency (less time on routine work) and Activity (more PRs completed) without degrading Satisfaction or Communication, because the human review step remains unchanged.

Whether background agents produce the same code churn issues that GitClear's research has flagged with synchronous AI coding remains to be seen. But the model (agent proposes, human reviews) addresses the biggest failure mode of current tools: unreviewed AI output shipping to production.


Three Things This Changes for Engineering Teams

1. The On-Call Rotation

Agents handle first-response to issues. When a bug report comes in, the agent reads the stack trace, identifies the relevant code, and drafts a fix before a human even sees the notification. The on-call engineer becomes a reviewer, not the first responder.

This does not replace on-call. Complex incidents still require human judgment. But the volume of straightforward bug fixes that interrupt deep work goes down.

2. The PR Backlog

Agents generate PRs for routine work: dependency updates, documentation syncs, test additions. Humans focus on architectural decisions and business logic. The PR backlog shifts from "work waiting for someone to do it" to "work waiting for someone to review it."

This flips the bottleneck. Most teams are blocked on writing code. With Continuous AI, the bottleneck moves to reviewing code, which is a faster activity and one where domain expertise matters more.

3. The Junior Developer Question

If agents handle routine tasks, what does the junior developer do? How do they learn? This is the hardest question Continuous AI raises, and it does not have a clean answer yet.

One perspective: junior developers shift from writing routine code to reviewing agent output, which is actually a faster path to understanding a codebase. Reading and evaluating code is how senior developers spend most of their time anyway.

Another perspective: removing the "struggle phase" of writing basic code stunts growth. The industry has not answered this yet, and anyone who claims to have is guessing.

Microsoft's Julia Kordick framed it well: "Agents explore, correlate, propose; humans decide, review, take responsibility." That division of labor may hold, but how humans develop the judgment to decide, review, and take responsibility without first doing the exploration themselves is an open question.

Should You Use Continuous AI Today?

Ready for Production

- ✓ Issue triage and labeling

- ✓ Documentation updates

- ✓ Dependency bumps

- ✓ Test generation for existing code

- ✓ Changelog and release note drafting

Not Ready Yet

- ✗ Complex multi-file refactors

- ✗ Architectural decisions

- ✗ Domain-specific business logic

- ✗ Security-critical code paths

- ✗ Performance-sensitive code

Our Recommendation

We use these tools daily. Here is how we would approach it:

- Start with read-only agents. Analysis, labeling, reporting. No write access to source code.

- Graduate to write agents for low-risk paths. Documentation, tests, dependency bumps. Things where a bad PR is easy to spot and costs nothing.

- Keep architectural work human-driven. Agents do not understand your business constraints, your team's velocity, or the political dynamics of your codebase.

- Review agent PRs with the same rigor as human PRs. The whole model depends on this. If you rubber-stamp agent output, you lose the safety net.
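One way to enforce the first two recommendations is to write the stages down and check each workflow's requested permissions against them. The stage names and permission sets below are hypothetical, chosen to match the text. Labeling issues needs issues: write, but source code stays read-only until the second stage. Path restrictions would still come from safe-outputs.

```python
# Ordering of GitHub Actions permission levels, lowest to highest.
LEVELS = {"none": 0, "read": 1, "write": 2}

# Hypothetical rollout stages; scopes not listed default to "none".
STAGES = {
    "read-only": {"contents": "read", "issues": "write"},
    "low-risk-write": {"contents": "write", "issues": "write", "pull-requests": "write"},
}


def exceeds_stage(stage: str, requested: dict[str, str]) -> list[str]:
    """Return the permission scopes that ask for more than the stage allows."""
    allowed = STAGES[stage]
    return [
        scope
        for scope, level in requested.items()
        if LEVELS[level] > LEVELS[allowed.get(scope, "none")]
    ]


bug_fixer = {"contents": "write", "pull-requests": "write"}
print(exceeds_stage("read-only", bug_fixer))       # ['contents', 'pull-requests']
print(exceeds_stage("low-risk-write", bug_fixer))  # []
```

A check like this could run in an ordinary CI job. A bug-fix agent that asks for write access cannot sneak into a repository that is still in the read-only stage.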


The Pipeline Just Got a Brain

CI/CD automated the mechanical parts of software delivery. Build. Test. Deploy. Continuous AI adds the parts that required a human: analyze, decide, draft, respond.

The pattern is the same one showing up across the industry: agents propose, humans decide. The DORA State of DevOps reports have been tracking deployment frequency, lead time for changes, change failure rate, and mean time to recovery for years. Background agents could improve lead time and deployment frequency without degrading the stability metrics (change failure rate and time to recovery), but only if the review step stays rigorous.
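The four DORA metrics are easy to approximate from deployment records, which makes it possible to check whether background agents actually move them. The sketch assumes each record carries a commit time, a deploy time, a failure flag, and a restore time. Deployment and dora_metrics are hypothetical names. Measuring recovery from the deploy time is a simplification, and DORA's own definitions are more careful.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def _hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def dora_metrics(deploys: list[Deployment], period_days: float) -> dict[str, float]:
    """Approximate the four DORA metrics over one reporting period."""
    if not deploys:
        raise ValueError("need at least one deployment")
    failures = [d for d in deploys if d.failed]
    recoveries = [_hours(d.restored_at - d.deployed_at)
                  for d in failures if d.restored_at is not None]
    return {
        "deployments_per_day": len(deploys) / period_days,
        "median_lead_time_hours": median(_hours(d.deployed_at - d.committed_at) for d in deploys),
        "change_failure_rate": len(failures) / len(deploys),
        "mean_time_to_recovery_hours": sum(recoveries) / len(recoveries) if recoveries else 0.0,
    }


start = datetime(2026, 3, 2, 9, 0)
deploys = [
    Deployment(start, start + timedelta(hours=4)),
    Deployment(start, start + timedelta(hours=6), failed=True,
               restored_at=start + timedelta(hours=7)),
    Deployment(start, start + timedelta(hours=2)),
    Deployment(start, start + timedelta(hours=8)),
]
metrics = dora_metrics(deploys, period_days=2)
print(metrics)
```

Tracking these numbers before and after introducing agents is one way to tell whether lead time improves while change failure rate holds steady.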

GitHub Continuous AI is early. The gh aw extension has 380 stars, not 38,000. The workflow format will probably change. But the direction is clear. The teams that learn to work with background agents will ship faster than those that stick with purely deterministic pipelines.

The CI/CD pipeline is not dead. It just got a colleague.